Introduction

In recent years, deepfake videos have become one of the biggest challenges on the internet. With the help of artificial intelligence, it is now possible to create videos that look completely real—even when they are fake.

These videos can spread misinformation, damage reputations, and even be used for cybercrime. Because of this, learning how to detect deepfake videos is becoming an essential digital skill for students, professionals, and everyday internet users.

Artificial intelligence is a branch of computer science that enables machines to learn from data. According to IBM, AI systems can analyze large datasets and recognize complex patterns.

Artificial intelligence is transforming many industries, especially automation. If you are new to this technology, you can read our guide on AI automation for beginners to understand how automation tools work.

In this guide, you will learn what deepfakes are, how they are created, and the most effective methods to identify them.

How to Detect Deepfake Videos: Step-by-Step Guide

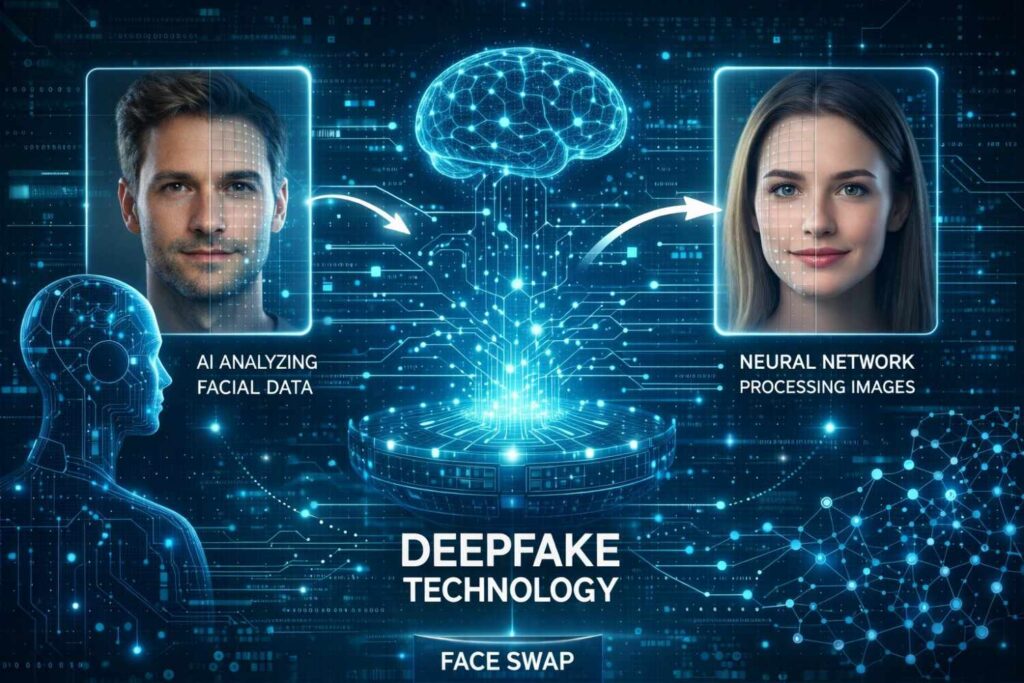

A deepfake video is a video that has been manipulated using artificial intelligence to replace a person’s face, voice, or actions with someone else’s.

The term “deepfake” comes from two words:

- Deep learning (a type of artificial intelligence)

- Fake (something that is not real)

Using deep learning algorithms, AI systems analyze thousands of images and videos of a person and then generate new content that appears realistic. These technologies are part of the broader field of artificial intelligence and machine learning, which allows computers to learn patterns from large amounts of data.

Researchers from MIT explain that deepfake videos are created using deep learning algorithms that generate highly realistic synthetic media.

Why Deepfake Videos Are Dangerous

Deepfake videos can be used in both positive and negative ways. While they have legitimate uses in movies and entertainment, they are often misused for harmful purposes. The FBI warns that manipulated videos and online scams are increasing and that users should verify suspicious content.

Some common risks include:

- Spreading fake political information

- Creating fake celebrity videos

- Online scams and fraud

- Manipulation of public opinion

- Cybersecurity threats

Because deepfakes are becoming more realistic, detecting them is increasingly important.

Key Tips on How to Detect Deepfake Videos Easily

Although deepfakes are improving, many of them still contain small mistakes. Here are some of the most common signs to look for.

1. Unnatural Facial Movements

Deepfake videos sometimes show unusual facial expressions. The face may look slightly distorted or not perfectly aligned with the head.

Look for:

- Strange blinking patterns

- Odd lip movements

- Facial expressions that don’t match the emotions

2. Poor Lip Synchronization

One of the easiest ways to detect a deepfake is by observing the lip movement.

If the voice does not perfectly match the lip movement, the video may be manipulated.

Signs include:

- Delayed lip movement

- Words not matching mouth shapes

- Audio slightly out of sync
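
The signs above can also be checked numerically. The sketch below assumes you already have two per-frame series from some other tool: how open the mouth is and how loud the audio is. Both input names (`mouth_open`, `audio_energy`) and the `max_lag` setting are hypothetical. It looks for the frame shift at which the two series line up best. A best shift far from zero suggests the audio and lips are out of sync, which is a hint worth investigating, not proof of manipulation.

```python
from statistics import mean


def correlation(xs, ys):
    """Pearson correlation of two equal-length series (0.0 if either is flat)."""
    mx, my = mean(xs), mean(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den_x = sum((x - mx) ** 2 for x in xs) ** 0.5
    den_y = sum((y - my) ** 2 for y in ys) ** 0.5
    if den_x == 0 or den_y == 0:
        return 0.0
    return num / (den_x * den_y)


def best_lag(mouth_open, audio_energy, max_lag=10):
    """Return (lag, score): the shift in frames with the highest correlation.

    A positive lag means the audio arrives after the matching lip movement.
    """
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            xs = mouth_open[: len(mouth_open) - lag]
            ys = audio_energy[lag:]
        else:
            xs = mouth_open[-lag:]
            ys = audio_energy[: len(audio_energy) + lag]
        n = min(len(xs), len(ys))
        if n < 2:
            continue  # not enough overlap to compare
        score = correlation(xs[:n], ys[:n])
        if score > best_score:
            best, best_score = lag, score
    return best, best_score
```

At 30 frames per second, a lag of 3 frames corresponds to a delay of about a tenth of a second.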

3. Unnatural Eye Blinking

Earlier deepfake systems struggled to reproduce natural eye blinking.

Although newer models are improving, you may still notice:

- Very little blinking

- Too much blinking

- Unnatural eye movement
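
To make "very little" and "too much" blinking concrete, here is a minimal sketch that counts blinks in a clip and turns them into a rate. It assumes a separate tool has already produced one eye-openness value per frame (close to 0 when the eyes are shut). The threshold `closed_below` and the cut-offs `low` and `high` are illustrative assumptions, not established limits, so tune them against videos you know are genuine.

```python
def count_blinks(eye_openness, closed_below=0.2):
    """Count blinks as runs of consecutive frames below the threshold."""
    blinks = 0
    eyes_closed = False
    for value in eye_openness:
        if value < closed_below:
            if not eyes_closed:
                blinks += 1  # a new closed-eye run starts here
            eyes_closed = True
        else:
            eyes_closed = False
    return blinks


def blink_rate_label(eye_openness, fps, low=5.0, high=40.0):
    """Return (blinks per minute, label) for one clip.

    `low` and `high` are illustrative cut-offs, not clinical values.
    """
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive fps")
    minutes = len(eye_openness) / fps / 60
    rate = count_blinks(eye_openness, closed_below=0.2) / minutes
    if rate < low:
        return rate, "very little blinking"
    if rate > high:
        return rate, "too much blinking"
    return rate, "within the assumed range"
```

Short clips give noisy rates, so a label from a few seconds of footage should be treated with caution.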

4. Lighting and Shadow Errors

In a deepfake video, the face may not match the lighting conditions of the environment.

Look for:

- Shadows that do not match the surroundings

- Different lighting on the face compared to the background

- Sudden brightness changes around the face
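
Sudden brightness changes around the face are one of the easier signs to measure. The sketch below assumes you have the average brightness of the face area and of the background for each frame (both inputs are hypothetical and would come from another tool). It flags frames where the gap between the two changes abruptly. The `max_step` value is an illustrative assumption.

```python
def brightness_jumps(face_brightness, background_brightness, max_step=20.0):
    """Return frame indices where the face-vs-background gap changes abruptly.

    Both inputs hold one average brightness value (0-255) per frame.
    """
    gaps = [f - b for f, b in zip(face_brightness, background_brightness)]
    return [
        i
        for i in range(1, len(gaps))
        if abs(gaps[i] - gaps[i - 1]) > max_step
    ]
```

Comparing the gap rather than the face alone means a scene-wide change, such as a camera flash that brightens everything, is less likely to be flagged.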

5. Blurry or Distorted Edges Around the Face

Deepfake software sometimes struggles to blend the face perfectly with the body.

You might notice:

- Blurred facial edges

- Pixelated areas around the face

- Skin tone mismatches

These visual inconsistencies can indicate manipulation.
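
Blur can be estimated with a simple sharpness score. The sketch below uses the variance of a Laplacian filter, a common general-purpose focus measure, on a small grayscale patch given as a list of rows. This is a stand-in technique for illustration, not the method of any particular detection tool. The idea is to score a patch along the edge of the face and a patch elsewhere in the frame: a much lower score at the face edge suggests that area is blurrier than the rest.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Sharpness score for a 2D grid of grayscale values.

    Applies a 4-neighbour Laplacian to every interior pixel and returns
    the variance of the responses. Higher means more fine detail.
    """
    rows, cols = len(gray), len(gray[0])
    if rows < 3 or cols < 3:
        raise ValueError("patch must be at least 3x3 pixels")
    responses = [
        gray[r - 1][c] + gray[r + 1][c] + gray[r][c - 1] + gray[r][c + 1]
        - 4 * gray[r][c]
        for r in range(1, rows - 1)
        for c in range(1, cols - 1)
    ]
    return pvariance(responses)
```

Scores are only comparable between patches of the same video, because compression and resolution change the absolute numbers.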

Tools That Help Detect Deepfake Videos

Technology is also being used to fight deepfakes. Several tools and platforms can analyze videos to detect manipulation.

Some examples include:

- Microsoft Video Authenticator

- Deepware Scanner

- Sensity AI

- Intel FakeCatcher

These tools analyze facial movements, pixel patterns, and inconsistencies to determine whether a video is fake.

Microsoft has developed AI systems that help identify manipulated media and improve digital trust.

Many companies now rely on specialized services such as an AI automation agency to implement intelligent automation solutions and AI-driven workflows.

Steps to Verify a Suspicious Video

If you suspect a video might be fake, follow these steps.

Step 1: Check the Source

Ask yourself:

- Where did the video come from?

- Is it from a trusted news source?

- Is it verified by credible organizations?

If the source is unknown or suspicious, the video may not be reliable.

Step 2: Perform a Reverse Image Search

Take a screenshot from the video and perform a reverse image search using tools like Google Images.

This helps determine whether the footage has appeared elsewhere before, and in what context.
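
If you want to compare two screenshots yourself, a perceptual hash gives a rough idea of how near-duplicate images can be matched. The sketch below implements a basic average hash on a small grayscale grid given as a list of rows. It is an illustration of the general idea only and is not how Google Images or any specific search engine works.

```python
def average_hash(gray):
    """Return an integer hash with one bit per pixel.

    A bit is 1 when the pixel is brighter than the image average.
    """
    pixels = [p for row in gray for p in row]
    avg = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > avg else 0)
    return bits


def hamming_distance(hash_a, hash_b):
    """Count the bits that differ between two hashes."""
    return bin(hash_a ^ hash_b).count("1")
```

A small distance means the two images have a similar light and dark layout, even if one was brightened or recompressed. Both images need to be resized to the same small grid (for example 8x8) before hashing.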

Step 3: Compare with Original Videos

Search for original videos of the same person.

Compare:

- Voice

- Facial expressions

- Body language

Differences may reveal manipulation.

Step 4: Look for Expert Verification

Many fact-checking websites analyze viral videos and flag deepfakes. Checking a trusted fact-checking source can help confirm whether a video is authentic.

How Artificial Intelligence Detects Deepfakes

Researchers are developing AI systems that can identify fake videos by analyzing patterns invisible to the human eye.

These systems examine:

- Pixel-level inconsistencies

- Biological signals (like heartbeat patterns in videos)

- Facial movement patterns

- Compression artifacts

Ironically, artificial intelligence is now being used to fight AI-generated deepfakes.
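
To give a feel for the "biological signals" idea, here is a toy sketch. It assumes a per-frame average of the green channel over the face area is already available (the input name `green_means` is hypothetical) and looks for the strongest rhythm within a plausible heart-rate range. The 42 to 180 beats-per-minute range is an assumption. Real detectors are far more sophisticated, so this is only meant to show the principle.

```python
import math


def dominant_pulse_bpm(green_means, fps, low_bpm=42, high_bpm=180):
    """Return the beats-per-minute value with the strongest periodic signal.

    Scans whole-number BPM values and measures signal power at each
    frequency with a direct discrete Fourier sum.
    """
    if not green_means or fps <= 0:
        raise ValueError("need at least one frame and a positive fps")
    avg = sum(green_means) / len(green_means)
    centered = [g - avg for g in green_means]  # remove the constant offset
    best_bpm, best_power = None, -1.0
    for bpm in range(low_bpm, high_bpm + 1):
        freq = bpm / 60.0  # cycles per second
        re = sum(
            x * math.cos(2 * math.pi * freq * i / fps)
            for i, x in enumerate(centered)
        )
        im = sum(
            x * math.sin(2 * math.pi * freq * i / fps)
            for i, x in enumerate(centered)
        )
        power = re * re + im * im
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm
```

The function always returns some value, so a useful check would also need to ask how much the strongest rhythm stands out from the rest. A face with no clear pulse-like rhythm is at most a hint, since lighting, motion, and compression can all hide the signal in a genuine video.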

Tips to Protect Yourself from Deepfake Scams

Here are some practical tips to stay safe online.

- Do not trust viral videos immediately.

- Verify information from multiple sources.

- Be cautious of videos asking for money or personal data.

- Follow credible news organizations.

- Learn basic digital media literacy.

Being cautious online is the best defense against digital manipulation.

The Future of Deepfake Detection

Deepfake technology will continue to evolve as artificial intelligence becomes more powerful.

However, governments, tech companies, and researchers are actively working on solutions to detect and prevent deepfake misuse.

Future detection methods may include:

- AI-powered verification systems

- Blockchain-based media authentication

- Digital watermarks for original videos
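
The simplest building block behind media authentication is a cryptographic fingerprint. The sketch below is an assumption about how such a check could look, not a description of any existing system: a publisher announces the SHA-256 digest of the original file, and anyone can recompute it to see whether their copy is byte-for-byte identical. This is hashing, not watermarking; a watermark is embedded in the video itself.

```python
import hashlib
import hmac


def fingerprint(video_bytes):
    """Return the SHA-256 digest of the raw file bytes as a hex string."""
    return hashlib.sha256(video_bytes).hexdigest()


def matches_published(video_bytes, published_hex):
    """True if the file's digest equals the digest the publisher announced."""
    return hmac.compare_digest(fingerprint(video_bytes), published_hex.lower())
```

A mismatch only shows that the bytes differ. Platforms often re-encode uploads, which changes the digest without changing what the video shows, so this check works best on files obtained directly from the publisher.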

These innovations will help create a safer digital environment.

As AI technology evolves, automation systems are becoming more advanced. Experts believe the future of AI automation will bring powerful tools that can both create and detect synthetic media.

Conclusion

Deepfake videos are one of the most challenging problems in today’s digital world. With the rapid development of artificial intelligence, distinguishing real videos from fake ones is becoming increasingly difficult.

However, by understanding the warning signs—such as unnatural facial movements, lighting inconsistencies, and poor lip synchronization—you can identify many deepfakes.

Using verification tools, checking reliable sources, and staying informed about digital manipulation techniques can help you protect yourself from misinformation.

As technology evolves, awareness and critical thinking will remain the most powerful tools for detecting deepfake content.

Frequently Asked Questions (FAQ)

What is a deepfake video?

A deepfake video is an AI-generated video in which a person’s face or voice is digitally manipulated to make them appear to say or do something they never actually did.

Can deepfake videos be detected?

Yes. Deepfakes can be detected by looking for unnatural facial movements, lighting inconsistencies, and lip synchronization errors, and by using specialized AI detection tools.

Why are deepfakes dangerous?

Deepfakes can spread misinformation, damage reputations, influence politics, and enable online scams.

Are deepfakes illegal?

In many countries, malicious use of deepfakes—such as fraud, harassment, or misinformation—can be illegal.